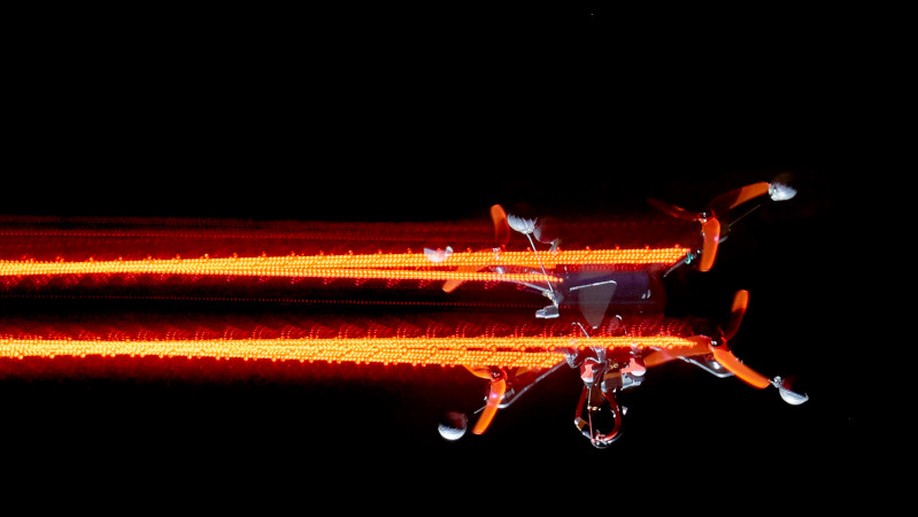

Ever since I played with Lego as a child, I have been fascinated by automation, robotics, and, more generally, teaching machines to be useful. Today, I would summarize my passion as the science of formulating problems efficiently in a way machines can understand, which I apply to estimation and control problems for autonomous drones.

Interests

- Optimal Estimation and Control

- Robotic Systems

- C++ Programming

Education

- PhD in Robotics, 2017 - 2021, Robotics & Perception Group

- MSc in Mechanical Engineering, 2017, ETH Zurich

- BSc in Mechanical Engineering, 2015, ETH Zurich